Message from the Executive Director

2022 was difficult for the UIXP.

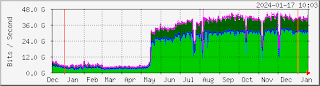

The year started off strong. In January we had 27 networks exchanging 35 Gbps of peak daily traffic. In February we connected our management network to our route servers in order to deliver new low-latency services. In March we deployed a dark fibre link between Raxio and Communications House to facilitate multi-site peering. In May we helped Google extend their global network to Uganda to facilitate the distribution of their traffic via the UIXP.

By June our peak daily traffic reached a record high of 50 Gbps and we planned to start offering free 100M ports – but then a series of external problems destabilized and eventually disabled both Google’s and Akamai’s peering amid a lingering national Facebook ban. This multifaceted environmental disaster reduced our peak traffic to 6 Gbps and cut our income immediately after we incurred new costs related to our multi-site expansion.

We are doing our best to help Google and Akamai resolve their issues while we work with Netflix and Meta to deploy new caching systems. We have also assumed a more conservative budgetary posture in an effort to maintain financial stability during this difficult period. We faced an unusually high number of delinquencies at the end of the year and we will be working to address this in the coming months.

In the background, we continue to contribute to community initiatives at the local, regional, and global levels. This includes the Uganda Network Operators Group, the ISP Association of Uganda, the ICT Association of Uganda, the African Network Operators Group, the African Peering & Interconnection Forum, the African IXP Association, and the Internet Exchange Federation.

I encourage everyone to read the full report for more details. As always, we are grateful for everyone's support and look forward to working together for the good of the Internet in 2023.

Kyle Spencer,

Executive Director

Raxio Promotional Discounts

As a reminder, significant discounts are available to all Raxio customers, including a free cross-connect to the UIXP and a 6-month 25% discount on all exchange services. If you would like to connect, upgrade your port, or learn more about our services, please contact us.

New Members & Connections

Google (AS 15169), Raxio (AS 328821), Group Vivendi Africa (AS 36924), and the Uganda Internet Exchange Point management network (AS 328998) joined the exchange in 2022. This diverse array of content, enterprise, and access networks is connected to our new node in the Raxio data center, bringing the total number of peers at that location to five.

Looking ahead, we intend to engage a broader range of Raxio customers in order to expand our membership. The more networks that connect, the more valuable the exchange is to everyone.

Technical Updates

We upgraded our inter-site link to 100 Gbps with geographic redundancy in March 2022 in order to accommodate the anticipated flow of Google traffic between Raxio and Communications House where the majority of our customers are connected. C-Squared is providing this link and we are grateful for their reliable and attentive service.

We experienced multiple broadcast storms in 2022 that significantly disrupted some of our member networks. In response, we are gradually implementing technical changes to reduce the possibility of future disruptions. Most notably, we now restrict all ports to a single MAC address.

As a reminder, we send technical alerts about notable changes and foreseeable outages to the members-only techies@uixp.co.ug mailing list. If your network is peering but is not a member and would like to be, please contact us.

Content Networks: Outages & Upcoming Deployments

Four content networks are connected to the UIXP but only YoTV is actively peering. Facebook, Google, and Akamai have all been disabled by different circumstances detailed below:

- The Facebook cache (AS 63293) stopped serving traffic in January 2021 after a government ban forced network operators to block access to the service. This reduced local bandwidth production by 30% and damaged Uganda’s reputation in the global telecommunications industry. Meanwhile, many users continue to access Facebook via virtual private networks (VPNs) which force network operators to import traffic from more expensive international sources. In other words, the Facebook ban increased the cost of service delivery, reduced overall performance, and steered investment to neighbouring countries which are perceived to have more attractive and stable policy environments.

- Google (AS 15169) established a remote peering session in May 2022 after decommissioning their prototype “self serve” cache (AS 36040). However, Google’s regional transport suppliers were unable to deliver a stable link to Mombasa despite six months of joint troubleshooting. The session was ultimately disabled in December 2022 due to extreme instability and poor quality of service. Google is now working to change transport suppliers and we are actively supporting them with this process.

- Akamai’s cache (AS 20940) stopped serving traffic when Google’s instability began because it forced the cache-fill donor, NITA-U, to re-route Google-related user traffic via international links. This overloaded their capacity and the resulting congestion forced the Akamai cache offline. NITA-U is working to upgrade their international capacity but the procurement timeline is uncertain. We are therefore searching for a new donor and may need to implement a paid access model until Akamai can justify paying for the cache-fill themselves.

On a more positive note, Netflix and Meta are shipping us new edge caches which we hope to receive next month. Our goal is to bring them online in the second quarter of this year:

- Meta plans to procure their own cache-fill and will peer with our route servers. Their new system will reportedly make it easier for networks to distinguish between traffic related to Facebook and other Meta properties such as Instagram, Oculus, and WhatsApp.

- Netflix requires a cache-fill donor but their requirements are relatively easy to fulfill due to the static nature of their content catalogue. If your network has 500 Mbps of idle capacity at night and would like to donate it to the UIXP community for this purpose, please contact us to discuss the requirements in more detail.

The 2021 Facebook ban continues to suppress overall utilization of the Internet and demand for our regional interconnection service. It also increases the cost of Internet service delivery by forcing network operators to import Facebook traffic via international VPNs rather than sourcing it locally from our cache. In general, it makes the Internet more expensive and constricts Uganda’s commercial gravity relative to neighbouring telecommunications markets.

We are also concerned by the high taxes levied on Internet services which now account for over 50% of the total cost of access. Like the Facebook ban, this also makes the Internet more expensive, suppresses overall utilization, and reduces demand for our service.

Financial Challenges

We unfortunately ended the year without any surplus or savings due to an unusually high number of delinquencies. The problem is limited to a minority of networks but the loss of income is significant due to the thin margins of our “cost-recovery plus” sustainability model.

While we are sympathetic to the difficulties that network operators face in our market, it is in everyone’s best interest that all networks keep their accounts up to date. Income instability impacts our ability to pay suppliers, maintain our infrastructure, and plan for the future.

That aside, our cash reserves remain intact and we remain in good standing with the Uganda Revenue Authority (URA) despite occasional conflicts caused by erroneous assessments, improper penalties, and onerous bureaucracy.

Community Engagement

UGNOG: The UIXP continues to facilitate donations for the Uganda Network Operators Group (UGNOG) until they can formalize and register a bank account. Any organization that wishes to sponsor UGNOG should notify us by sending an e-mail to accounts@uixp.co.ug and copying (CC) isabel.odida@gmail.com.

ISPAU & ICTAU: The UIXP is a member of the ISP Association of Uganda (ISPAU) and ICT Association of Uganda (ICTAU) and works through both organizations to promote community development and good policy outcomes for our industry.

AFIX, AfPIF, & AfNOG: Our team remains deeply involved in various voluntary leadership and support roles in the African IXP Association (AFIX), the African Peering and Interconnection Forum (AfPIF), and the African Network Operators Group (AfNOG).